Essential MySQL Tuning

TL;DR Make sure you increase innodb_buffer_pool_size from the stock value!

One of our client's servers (that we inherited from another developer) has been having some issues with high CPU load. Upon investigating, we found that MySQL was frequently spiking to use 40-50% of the server's total CPU power. The level of traffic on the site didn't seem to explain a heavily-loaded database.

Digging in to the MySQL configuration, we found that the innodb_buffer_pool_size variable was set to the default value. (Check this by running "show variables like 'innodb_buffer_pool_size';" at the MySQL prompt.) This variable controls how much RAM MySQL can use to cache content, and it is usually the most important knob to adjust when tuning MySQL. Since this was an older server, that default was 8MB!* That's right: on this 4GB server, only 8MB was devoted to caching database content in RAM! This meant that MySQL had to fetch almost all of the data from disk for every single query, which is at least one order of magnitude slower than fetching it from RAM.

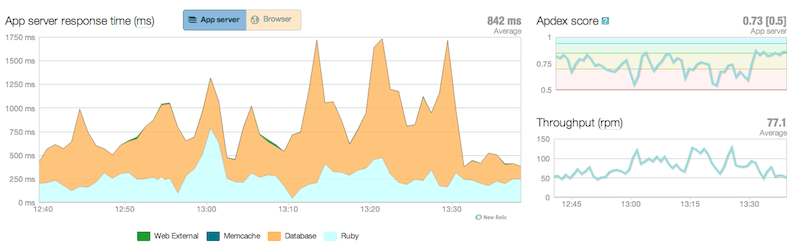

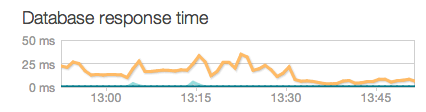

Next, we had to decide how much RAM to allocate to the database. Ideally, the database should be allocated enough RAM to cache the entire database, but since this server was also running Apache, Passenger, and Rails, we decided to allocate 1GB of RAM to MySQL, or enough to cache about a quarter of the database. We made this change in /etc/my.cnf, and restarted MySQL. You can see the results here:

The flat part towards the right of the graphs shows how the response time was lowered and became more consistent after the change.

The server load is much lower now and the site is more responsive. This should result in happier users and more pageviews! We're a bit sad that this poor server had to struggle unnecessarily for so long, but we're happy that we were able to breathe new life into it!

* 8MB was the default as of MySQL 5.0. It was increased to 128MB in 5.1, which is better, but still usually inadequate.